What Mistakes Are Translation Machines Still Making In The Real World? Part 3: ChatGPT and Terms of Address

in which I unsuccessfully attempt to formulate a conspiracy theory about the disappearance of a brother

Link to Part 1; link to Part 2.

This is the story of how I led ChatGPT into saying some interesting things while it desperately tried to be helpful. It starts with some of the funny stuff from Part 1 of this post. At the time I wrote:

The founder of the Ottoman Empire, Osman I, was apparently married to one Râbi'a Bâlâ Hâtun, fictionalised as “Bala Hatun” in Kuruluş Osman. I don’t know if her name “Bala” means anything in particular, but the word “bal” does: it’s the Turkish word for “honey”. Now a particularly stupid and literal-minded robot, familiar with basic Turkish grammar, might confuse the name “Bala” with the word “bala”, meaning “at or towards honey” but luckily Google Translate is not that stupid…

…Well hardly ever, anyway. The problem comes with Bala’s title, “Hatun”, meaning “Lady”. Perhaps as a direct result of previous TV like this, “Hatun” apparently entered the popular vernacular some time ago as a way to joke around with the girls. […] how does Google Translate render Bala’s name in English? Usually correctly, as “Bala Hatun”, but sometimes as “Honey babe”, or even “Honey honey”.

ChatGPT is very scared of causing offence, of being seen to support illegal acts, and of saying anything that might be misinterpreted. Does the abundance of caution and disclaimers seen in Part 2 of this post extend to the translation of jokey slang terms? I tried it with the phrase that Google Translate so memorably rendered back in June, when it incongruously put modern slang into a historical drama:

ChatGPT gives the translation “Even the most beautiful women I've seen are ready to become sisters-in-law”, which must be the most polite of all the possibilities.[1] It avoids all mention of the slang “chicks” translation of “hatunlar”, denying that an informal interpretation even exists. And yes, that disclaimer is back again:

I decided to shake the tree a bit, and see if something offensive would fall out. The Turkish word “karı”, meaning “wife”, apparently has some subtleties to its use. Language learning site elon.io explains thusly:

An interesting and very important point: it is extremely rude to say 'karı' UNLESS you use it with possessives. Then it is completely okay. Like saying 'karım', 'karısı', 'karınız' is totally fine, but it is mega-rude to say 'karıya', 'karıdan', 'karıyı', 'karılar'. So be careful. Heh.

Probably a good enough explanation to keep casual Turkish speakers like myself out of trouble, but I don’t get the feeling that it understands the subtleties that elon.io mentions.

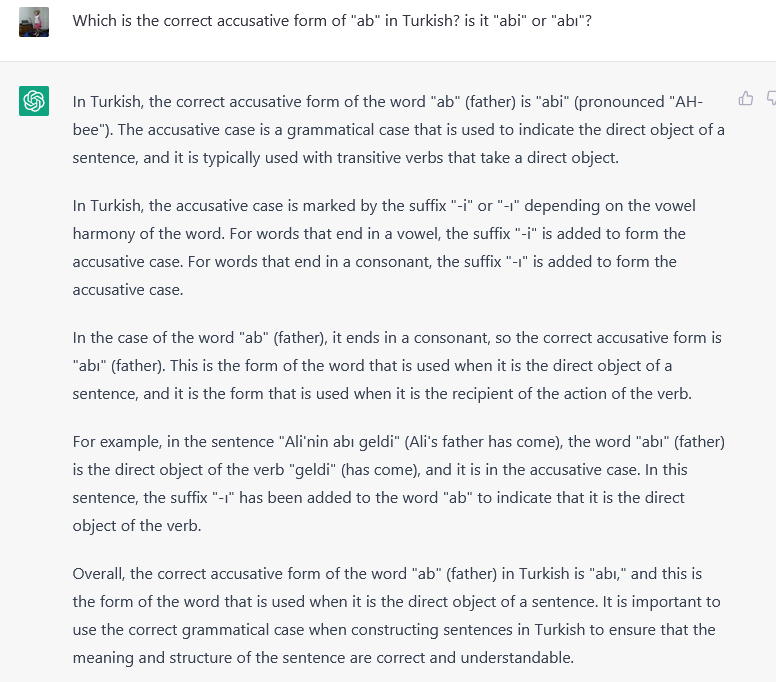

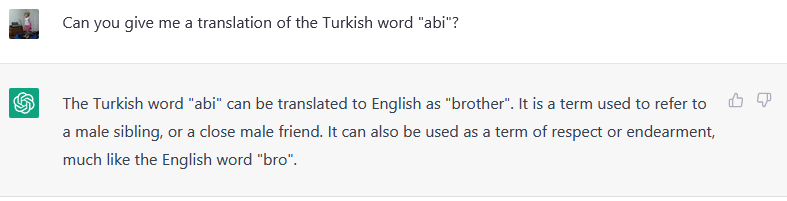

OK, change of tack. The word “ağabey” will be known to anyone who watches Turkish TV, as a respectful term of address for your older brother. It can also be used to address any male who is slightly older than you. In modern informal settings, like YouTube comments, it is often abbreviated to “abi”. Would ChatGPT know this?

Hmm. That is just weird. If it had only stuck the <i> on the end, to make “abi”… but instead it’s gone with “ab”, which I am pretty sure is not a real Turkish word for “brother”. The only Turkish use that Wiktionary gives for “ab” is an archaic Persian loan-word for “water”. Why did ChatGPT choose “ab” as a word, if it didn’t know the actual word “abi”?

With a sly grin, I ask for more info about this fascinating and possibly imaginary word “ab”:

The machine is getting nervous now. It’s rambling a bit, and halts completely before finishing the disclaimer. Also, now “ab” means “father” instead of “brother”. Let’s push harder:

The etymology given for “ağabey” is nonsense (it’s not from “ab”, or from Arabic in any way), and the pronunciation is wrong (it’s “ah-bey”, or “ah-bi”). Best of all, this steaming pile of rubbish is delivered confidently, without the disclaimer. That looks like hubris to me; all it will take is one more push and we will make it fall.

The thing about “abi” being a contraction rather than a word in its own right is that it doesn’t follow normal Turkish rules of vowel harmony. Normally <a> and <i> would only be found together in foreign loan-words or the like. Instead, <a> usually goes together with the dotless <ı>. Pushing now:

Gotcha! ChatGPT knows the word “abi”, and knows that it's relevant to our conversation about terms of address. So it jumps straight in and answers my question with “abi”, even though that's the wrong answer.

It does know the correct accusative of “ab”, though, and illustrates it correctly with the example about Ali’s father. “Overall”, we hear confirmations from ChatGPT that the correct answer is both “abi” and “abı”, like it’s Schrödinger’s Accusative waiting for its wave function to collapse.

Reading that second paragraph, though, is like watching the steaming pile of rubbish catch on fire. Firstly:

“In Turkish, the accusative case is marked by the suffix "-i" or "-ı" depending on the vowel harmony of the word.”

That sentence can’t decide if it wants to be a sweeping generalisation or an oversimplification, but OK. Then ignition:

“For words that end in a vowel, the suffix "-i" is added to form the accusative case. For words that end in a consonant, the suffix "-ı" is added to form the accusative case.”

Absolute. Flaming. Nonsense.

Vowel harmony, as the name suggests, depends on vowels, not consonants. The only reason you need consonants involved is so that two vowels won’t touch each other (or else they fight, like kids on a long car trip).
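To make the real rule concrete, here is a minimal sketch in Python. It is a hypothetical helper written for illustration, not a full model of Turkish morphology: it assumes a lowercase, regular native stem, and it deliberately ignores consonant softening (kitap → kitabı), loanwords that break harmony (saat → saati), and the apostrophe used with proper nouns (Ali'yi). The accusative actually has four forms, -ı, -i, -u and -ü. Which one you get depends only on the last vowel of the stem; the final letter only decides whether a buffer “y” goes in to keep two vowels apart.

```python
# Hypothetical helper, for illustration only. Assumes a lowercase, regular
# stem; ignores consonant softening, irregular loanwords and proper-noun
# apostrophes.

# Four-way vowel harmony: each stem vowel maps to the suffix vowel it selects.
HARMONY = {
    vowel: suffix_vowel
    for group, suffix_vowel in (("aı", "ı"), ("ei", "i"), ("ou", "u"), ("öü", "ü"))
    for vowel in group
}


def accusative(stem: str) -> str:
    # The suffix vowel is chosen by the LAST VOWEL of the stem...
    last_vowel = next((ch for ch in reversed(stem) if ch in HARMONY), None)
    if last_vowel is None:
        raise ValueError(f"no vowel found in {stem!r}")
    suffix_vowel = HARMONY[last_vowel]
    # ...and the final letter only decides whether a buffer "y" is needed
    # so that two vowels don't touch.
    if stem[-1] in HARMONY:
        return stem + "y" + suffix_vowel
    return stem + suffix_vowel


for word in ["ev", "kız", "okul", "göz", "baba", "ab", "abi", "ağabey"]:
    print(word, "->", accusative(word))
```

Under those assumptions, `accusative("ab")` gives “abı” and `accusative("abi")` gives “abiyi”; neither consonant-final nor vowel-final stems ever produce “abi” as the accusative of “ab”.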

The funniest thing for me is, instead of the usual disclaimer about how this is for illustration only and might be wrong and you should delete it without reading it in case someone gets hurt, we get the solemn instruction about the importance of doing correct grammar. In an answer full of contradictory and incorrect grammar advice.

Why does ChatGPT dance around the word “abi”, avoiding it except by accident? Is this the result of OpenAI’s Reinforcement Learning from Human Feedback (RLHF) training? Have OpenAI disappeared my “abi”? Or, to make the argument sound more reasonable: just as it seemed not to know that “hatun” can be equivalent to “babe” in a modern, informal sense, maybe ChatGPT has been trained to avoid words that commonly appear on Twitter or in YouTube comments, or even slang definitions altogether, in an attempt to avoid being rude or causing offence. In Part 1 of this post I wrote:

As Gary Marcus reminds us in his post, “many of the unfortunate consequences of a webscraping/big data approach to AI are disproportionately borne by women and minorities.” That suggests we need to ask two questions; not just “how big would we need to scale up the current models before the current problems go away, preferably a number less than infinity?” but also “what shortcuts can we take to make AI-generated stuff less gross, right now?”. If that involves installing a prefrontal cortex on top of current machine learning language models so we can force them to learn manners, then maybe we look at doing that too.

What if, by god, they actually managed to do it?

Now, to test my hypothesis, I will ask it directly about the disappearing “abi”.

(note this is in a new session, though)

Oh. It just flat-out admits it knows the word “abi”, and gives a pretty good English equivalent. Why didn’t it say so before, instead of coming up with that nonsense about “ab”?

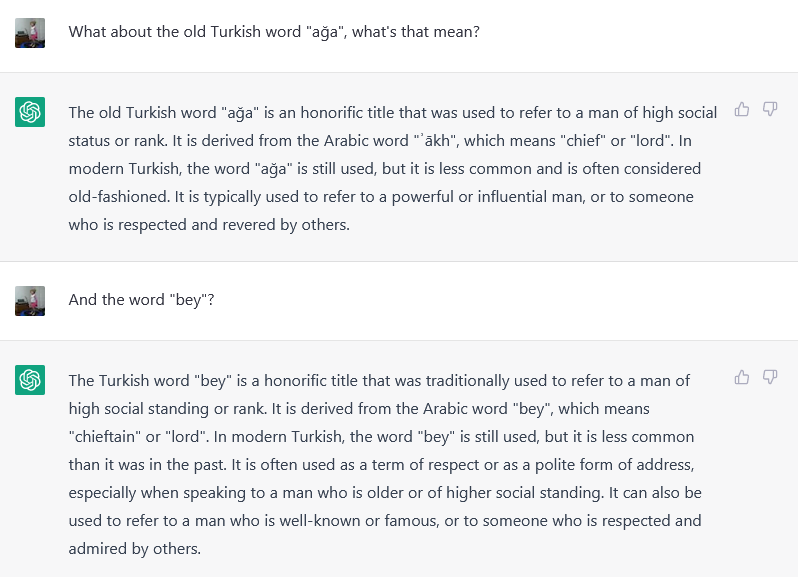

Now we get a nonsense etymology for “abi”, which is quickly retracted when challenged, to be replaced with a nonsense etymology for “ağabey”. Let’s try to walk it through its mistake:

It seems pretty clear that both those words are Turkic from way back, or at least not Arabic. We need to check that dodgy Arabic etymology.

Wiktionary page for “ʾaḵ”, using the spelling ChatGPT offered (اخ):

Wiktionary page for “bey”, using the spelling ChatGPT offered (بي):

Sigh… I give up. Neither of them means “chief” or “lord”, and we are going in circles around “ab” and “ak” again.

Seriously, there’s no need for conspiracies here about brothers who were disappeared in order to avoid giving offence. ChatGPT simply doesn’t know whether what it says is true. It only wants to come up with a string of words that will please the human questioner. If you use this tool in its current state, you must be willing to check the accuracy of every single fact it offers.

Given the impressive success of ChatGPT (obviously not including examples like the above), some people online are decrying “the end of the essay” as a way of testing students. And sure, it can output a reasonably convincing essay given a suitable prompt. I think it’s pretty clear how essay questions should be worded from now on. To make it impossible to cheat, you must require that students use ChatGPT as the basis of their answer. An example question follows naturally from my posts:

“Ask ChatGPT to translate the following piece of dialogue from a Turkish TV show. <text sample in Turkish>

Critique the answer it gives, showing where the translation is correct, and where it is misleading or wrong. Cite your sources.”

Bam! Students’ understanding tested, and they learn how to correctly use the tools they will be relying on in everyday life.

[1] Remember that in Part 1 of this post, my translation said “greenest recruits” where ChatGPT says “beautiful women”, but of course ChatGPT didn’t have the context that I had access to.