What Mistakes Are Translation Machines Still Making In The Real World? Part 2: ChatGPT Edition

in which ChatGPT tries very hard to provide helpful translations of obscure Islamic terms, while not saying anything offensive, illegal, or which might be misinterpreted in any way

Link to Part 1; link to Part 3.

Like a great many other people this week, I jumped into the hot-seat to talk to ChatGPT, the latest language model from OpenAI. It’s in research preview mode at the moment, which means anyone can have a try.

I have to say I was very impressed with what this language model can do, and it makes me excited for the future of machine translation. Its not perfect; as many others have noted it can very confidently serve you up a giant flaming pile of nonsense (more on this in the next post). It is, however, a significant advance over the translation machines I was discussing (and sometimes laughing at) in Part 1 of this post, only six months ago:

The argument that interests me is whether you need to deliberately build an understanding of the “meaning” of things into a translation machine, or if something like “meaning” will develop naturally if you simply scale the current machine’s brain big enough and fill it with more examples. I tend to think the second one might be true, but I accept that there could be some specific problems where in order for “understanding” to develop, you would need to scale the language model to be infinitely large (or at least so large that noone could afford to pay the electricity bill). In that case, you might need to install a module specifically designed to solve a problem like “meaning” more efficiently, like the way that computers have graphics cards to do all that computationally heavy 3D stuff and take the load off the CPU.

So yeah, I don’t know the answer and will leave it up to more knowledgeable people to discuss. But I will give some examples of things that translation robots currently fail at. Some of them are easy, and just require you give the language model a bigger Turkish dictionary to read. Some of them are harder, and will need time to solve. Some of them are just funny, and the machines don’t (yet) understand why.

Which problem (not necessarily one that I can identify) will be the last one that a translation robot learns to solve, before it attains perfection?

Machine Transcription of Subtitles (this bit isn’t directly about ChatGPT)

Now that first post discussed both transcription and translation, as performed by whatever robots Google uses for its automatic Youtube subtitling service. ChatGPT is obviously text input only for now, so transcription isn’t relevant. I need to talk about transcription first though, to set the scene for my chat with ChatGPT.

Compared to my amateur abilities, Youtube’s transcription machines are very, very good. They are far better than I am at listening to a stream of Turkish, and writing it down correctly. I have to slow down the videos, listen to them repeatedly, get prompted by Google’s attempt at transcription, and have multiple attempts at writing due to forgetting stuff before I can finish writing a sentence. Most of the free subtitles available online these days are machine-transcribed, with various levels of human curation.

Part of the success of machine transcription must be due to the Turkish language itself. Turkish had its alphabet, writing system and much of its vocabulary completely overhauled less than a century ago, and so Turkish spelling is beautifully sensible and predictable compared to English. If you try hard enough though, you can force transcription errors, like in this scene from Kuruluş: Osman (ATV 2022).1

This scene has everything that could possibly make it harder for the transcription machine. It’s set outdoors (and it’s possibly windy), there are multiple people shouting at once, there is music in the background, and there are a bunch of archaic words and unusual Arabic/Islamic vocabulary. It’s amazing to me that the machine performs as well as it does; I can hardly understand anything in the chorused replies. Here is the transcript; each line of the Google transcript is followed by my corrected version, with any differences in bold; then my own translation after that (If you can’t be bothered trying to read, don’t worry; I’ll summarise after):

24:53 Alplar! Üçler, yediler, kırklar aşkına!

Alplar! Üçler, yediler, kırklar aşkına!

Alps! For the sake of the Three, the Seven, the Forty!25:03 Azaya var mısınız? Varız!

Gazaya var mısınız? Varız!

Are you ready for Holy War? We are!25:08 Azaya var mısınız? Varız! Aza ne içindir?

Gazaya var mısınız? Varız! Gaza ne ucundur?

Are you ready for Holy War? We are! What is the goal of war?25:14 Rızayı hak içindir. Gazi olmaya var mısınız? Elhamdülillah.

Gazayı hak ucundur.2 Gazi olmaya var mısınız? Elhamdülillah.

War is God’s holy truth. Are you ready to become veterans? Elhamdülillah.25:20 Şahadet şerbetini içmeye var mısınız? Allah-u Ekber! Allah-u Ekber!

Şehadet şerbetini içmeye var mısınız? Allah-u Ekber! Allah-u Ekber!

Are you ready to drink the liquor of martyrdom? Allah-u Ekber! Allah-u Ekber!

Here is the tl;dr. There is a simple spelling error in “şehadet”,3 that could be fixed with a better dictionary. Ignoring “içindir/ucundur” for now, the rest of the mistakes are all because the model doesn't know the unusual word "gaza". Another easy fix, right? Just teach it what "gaza" means?

There’s more to it than that. “Gaza” is from an old Arabic word غَزَا (ḡazā, “to raid”), and in the Turkish example above it means “Holy war” (i.e. Muslims fighting against non-Muslims). Even though its reasonably well understood in Turkish (it’s used in a lot of historical dramas) it’s a bit obscure in English; for example there is no English Wiktionary page for the Turkish word “gaza”, and so Google has apparently never learned it. It chooses the word “aza” instead, which makes no sense, but at least it recognises it as a word. Hilariously, “aza” apparently means “member”, including in the sense of “body part”. Trust Google to sneak in a dick joke whenever possible.

However, the speech above also features a very similar word related to “gaza”, which is “gazi”; the word for a veteran of a Holy war. Wiktionary says this is actually a word in English, albeit with the alternative spelling “ghazi”. This publication’s namesake Ertuğrul is even given the title “Gazi” on his Wikipedia page. Hence, this is a known word for Google.

Here’s the interesting bit for me: if the transcription robot can hear the <g> in “gazi”, why can’t hear the <g> in “gaza”? It hears the sound “gaza” in very similar contexts (initial position in similar sentences) four times in a row, but it can’t transcribe it correctly. However, it gets the dictionary word “gazi” right first time, in a very similar context. If it can do this, and it can also pick out words mostly correctly from a chorus of Alps speaking in unison on a windy hillside with music in the background, then is it deliberately disbelieving its own sensory input when it comes to words it hasn’t encountered before? Does it ever hold the sound “gaza” in its working memory, correctly interpreted as such; then upon finding it doesn’t match to any known words, assumes it misheard instead?

This is why I think the above must be a hard problem for translation machines. Since words appear in common usage before they appear in dictionaries, machines will regularly be confronted with brand new words that they’ve never seen before. How many generations of language model will we have to go through before a new word can be correctly classified as such by the machine that first encounters it, rather than the alternative above which is to assume it misheard? How long until a chatbot can understand when to coin a new word, if its internal dictionary is lacking the words it needs to express something? Will that new word sound natural to the first human that hears it, or will that language model instantly fail the Turing test?

The answer to the “how long” questions above could well be “ChatGPT already does this now”. But if my understanding of how language models are trained is correct, i.e. they don’t understand “meaning” but instead repeat words based on how frequently they appear together in training data, then the language models could really struggle with adding something truly new.

Here’s a funny thing though; while writing this post I pretty much made the exact error I’m accusing the Youtube transcription robot of, i.e. ignoring my own ears and pattern-matching to something reasonably convincing but incorrect.

It was the “içindir/ucundur” thing. Google heard it as “içindir”, which actually makes sense in Turkish. “Ne içindir?” means “what is it for?”, while “ne ucundur?” is more like “what is the point of it?”. Different enough in pronunciation that I can tell them apart, but just similar enough that I read the Google transcription, said “yeah that looks fine” and moved on. In the back of my mind though, it must have been bothering me, and I went back to have another listen, and corrected myself.

So what does sharing errors with humans mean for language models? If they are already making human-like errors, maybe they are closer to perfection that we realise…

My Conversation With ChatGPT

So the other day I threw a transcription of the Turkish TV dialog above into ChatGPT and asked for a translation. It really did feel like a conversation - I felt a strange urge to thank it for its time at the end. I’ll reprint the interesting bits as I comment on each.

Note that at first I went very easy on the poor machine, since its had enough people trying very hard to break it4 this week. I asked nice, leading questions and generally tried to give it the information I thought it might need to give a good answer. And it did very well!

In the next post though I did ask a few trickier ones, following up on things it seemed unsure of, and managed to get it to directly contradict itself within a single answer (aren’t robots supposed to explode when that happens?).

Here’s the translation I was given for the dialog from Kuruluş: Osman. You can see how I tried to fix up Google’s transcription in my input, except for “içindir/ucundur”.5 I also labeled the speakers at the start of each line in case that helped with comprehension.

The first thing to notice is the arse-covering disclaimer at the end, that ChatGPT repeats constantly throughout the whole conversation. It’s perfectly happy to admit that using one of its translations in the real world might start World War 3. It also spouts disclaimers profusely when it can’t answer a question. It does this so often though, that I notice the glaring absence of a very necessary similar disclaimer that it should be giving whenever it can confidently answer a question: “Note: Every word I have just said to you may be complete bollocks and I wouldn’t even know. I’m a language model, not a truth model!”

Lets ignore that and look at the actual translation. Wow, ChatGPT knows what “gaza” means! It gets all four instances correct, for the maximum four points, pulling ahead of Google Translate and DeepL, which score nil points and 1.5 points respectively.6 Google gains half a point though, for translating "Hak" as "Right", whereas ChatGPT gets a full point for appreciating that "Hak" ("Truth") is also one of the 99 names of God. DeepL, slack-jawed, returns "Hak" unchanged, remaining in second place.

I decide to ask a few comprehension questions.

Not bad at all! No disclaimer, so it must be feeling confident in its translation. Let’s try something more difficult:

OK this was a particularly obscure one; it had actually taken me quite a while to track down an answer. In the first lines of the Turkish dialogue, “for the sake of the Three, the Seven, and the Forty” refers to the Saints of the Unknown (رجال الغيب "rijal al-ghayb"),7 a fascinating and secretive hierarchy who keep the world running according to God’s divine will. Some of the Sufi sects ascribe them with magical powers: according to this site these men can “can see remotely or travel through time and space in the service of Allah”, which would make them some sort of Islamic Doctor Who.

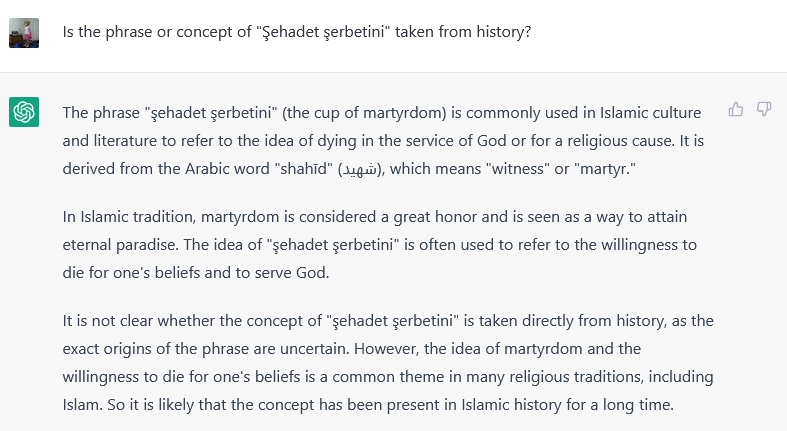

Even when I asked specifically if it knew of the rijal al-ghayb, ChatGPT wasn’t able to answer. OK, too difficult; how about this one instead:

First two paragraphs here: really nice answer for the martyrdom bit. The Arabic etymology (شهيد) seems correct, and its got a good bit of cultural explanation added in.

Third paragraph though, is incorrect; its pretty clear where this phrase comes from. The literal translation is “the Sherbet of Martyrdom”, but its not the fizzy Western sherbet, its the cold, sweet drink. The association with martyrdom comes from Imam Hussain, who was denied water before his death, but rewarded with sherbet in heaven. Shia Muslims celebrate his martyrdom in the Ashura festival.

If I stopped right there I would have to be very positive about the achievement that is ChatGPT. A good advance in machine translation, and a very usable alternative to Googling something for information, with reasonable accuracy when tested for more difficult historical knowledge. This was the good part of my chat with ChatGPT.

But I didn’t stop there. I managed to coax it off the beaten path and into the weeds, where it contradicted itself completely within a few paragraphs. For this story, you’ll have to see Part 3 of this post.

Kuruluş: Osman, 90 Bölüm; timestamp 24:53

Note that I cannot for the life of me understand a word that the Alps say here, so I will take Google’s transcription of “Hak” as truth.

This might not even be an error; weird stuff happens in Arabic with vowels and I don’t pretend to understand the rules.

These examples are hilarious and very much worth reading.

Lets pretend for a moment I deliberately and cleverly left an error in so I could test the machine on it. Smart move, that was.

I just checked both now (12 Dec 2022) to be sure.

Seems like in Turkish they might be called “gayb erenleri” (“those who are unseen”); the Arabic is transliterated into Turkish as “ricâlü’l-gayb”.